Chapter 6: The Myth of Objectivity

Scientists seek concepts and principles, not subjective perspectives. Thus, we cling to a myth of objectivity: that direct, objective knowledge of the world is obtainable, that our preconceived notions or expectations do not bias this knowledge, and that this knowledge is based on objective weighing of all relevant data on the balance of critical scientific evaluation. In referring to objectivity as a myth, I am not implying that objectivity is a fallacy or an illusion. Rather, like all myths, objectivity is an ideal -- an intrinsically worthwhile quest.

“One aim of the physical sciences has been to give an exact picture of the material world. One achievement of physics in the twentieth century has been to prove that that aim is unattainable.

“There is no absolute knowledge… All information is imperfect. We have to treat it with humility.” [Bronowski, 1973]

In this chapter we first will examine several case studies that demonstrate ways in which perception is much less objective than most people believe. Our primary means of scientific perception is visual: 70% of our sense receptors are in the eyes. Thus our considerations of perception will focus particularly on visual perception. We then will examine theories of how perception operates, theories that further undermine the fantasy of objectivity. These perspectives allow us to recognize the many potential pitfalls of subjectivity and bias, and how we can avoid them. Finally, we will address a critical question: can a group of subjective scientists achieve objective scientific knowledge?

* * *

“Things are, for each person, the way he perceives them.” [Plato, ~427-347 B.C., b]

What do the following topics have in common: football games, a car crash, flash cards, a capital-punishment quiz, relativity, and quantum mechanics? The study of each provides insight into the perception process, and each insight weakens the foundation of objectivity.

I was never much of a football fan. I agree with George Will, who said that football combines the two worst aspects of American life: it is violence punctuated by committee meetings. Yet, I will always remember two games that were played more than 20 years ago. For me, these games illuminate flaws in the concept of objective perception, suggesting instead that: 1) personal perception can control events, and 2) perceptions are, in turn, controlled by expectations.

It was a high school game. The clock was running out, and our team was slightly behind. We had driven close to the other team’s goal, then our quarterback threw a pass that could have given us victory; instead the pass was intercepted. Suddenly the interceptor was running for a touchdown, and our players merely stood and watched. All of our players were at least 20 yards behind the interceptor.

Though it was obvious to all of us that the attempt was hopeless, our star halfback decided to go after him. A few seconds later he was only two yards behind, but time had run out for making up the distance -- the goal was only 10 yards ahead. Our halfback dived, and he chose just the right moment. He barely tapped his target’s foot at the maximum point of its backward lift. The halfback cratered, and the interceptor went on running. But his stride was disrupted, and within two paces he fell -- about two yards short of a touchdown.

I prefer to think that our team was inspired by this event, that we stopped the opponents’ advance just short of a touchdown, and that we recovered the ball and drove for the winning touchdown. Indeed, I do vaguely remember that happening, but I am not certain. I remember that the halfback went on to become an All Star. Of this I am certain: I could read the man’s thoughts, those thoughts were “No, damn it, I refuse to accept that,” and willpower and a light tap at exactly the right moment made an unforgettable difference.

* * *

The game that affected me most I never saw. I read about it in a social anthropology journal article 27 years ago. The paper, called ‘They saw a game,’ concerned a game between Harvard and perhaps Yale or Dartmouth. The authors, whose names I don’t remember, interviewed fans of both teams as they left the game.

Everyone agreed that the game was exceedingly dirty, and the record of the referees’ called fouls proves that assertion. Beyond that consensus, however, it was clear that fans of the two teams saw two different games. Each group of fans saw the rival team make an incredibly large number of fouls, many of which the referees ‘missed’. They saw their own team commit very few fouls, and yet the referees falsely accused their team of many other fouls. Each group was outraged at the bias of the referees and at the behavior of the other team.

The authors’ conclusion was inescapable and, to a budding scientist imbued with the myth of scientific objectivity, devastating: expectations exert a profound control on perceptions. Not invariably, but more frequently than we admit, we see what we expect to see, and we remember what we want to remember.

Twenty-three years later, I found and reread the paper to determine how accurate this personally powerful ‘memory’ was. I have refrained from editing the memory above. Here, then, are the ‘actual’ data and conclusions, or at least my current interpretation of them.

In a paper called ‘They saw a game: a case study,’ Hastorf and Cantril [1954] analyzed perceptions of a game between Dartmouth and Princeton. It was a rough game, with many penalties, and it aroused a furor of editorials in the campus newspapers and elsewhere, particularly because the Princeton star, in this, his last game for Princeton, had been injured and was unable to complete the game. One week after the game, Hastorf and Cantril had Dartmouth and Princeton psychology students fill out a questionnaire, and the authors analyzed the answers of those who had seen either the game or a movie of the game. They had two other groups view a film of the game and tabulate the number of infractions seen.

The Dartmouth and Princeton students gave discrepant responses. Almost no one said that Princeton started the rough play; 36% of the Dartmouth students and 86% of the Princeton students said that Dartmouth started it; and 53% of the Dartmouth students and 11% of the Princeton students said that both started it. But most significantly, out of the group who watched the film, the Princeton students saw twice as many Dartmouth infractions as the Dartmouth students did.

Hastorf and Cantril interpreted these results as indicating that, when encountering a mix of occurrences as complex as a football game, we experience primarily those events that fulfill a familiar pattern and have personal significance.

Hastorf and Cantril [1954] conclude: “In brief, the data here indicate that there is no such ‘thing’ as a ‘game’ existing ‘out there’ in its own right which people merely ‘observe.’”

Was my memory of this paper objective, reliable, and accurate? Apparently, various aspects had little lasting significance to me: the teams, article authors, the question of whether the teams were evenly guilty or Dartmouth was more guilty of infractions, the role of the Princeton star in the debate, and the descriptive jargon of the authors. What was significant to me was the convincing evidence that the two teams ‘saw’ two different games and that these experiences were related to the observers’ different expectations: I remembered this key conclusion correctly.

I forgot the important fact that the questionnaires were administered a week after the game rather than immediately after, with no attempt to distinguish the effect of personal observation from that of biasing editorials. As every lawyer knows, immediate witness accounts are less biased than accounts after recollection and prompting. I forgot that there were also two groups who watched for infractions as they saw a film, and that these two groups undoubtedly had preconceptions concerning the infractions before they saw the film.

The experiment is less convincing now than it was to me as an undergraduate student. Indeed, it is poorly controlled by modern standards, yet I think that the conclusions stand unchanged. The pattern of my selective memory after 23 years is consistent with these conclusions.

I have encountered many other examples of the subjectivity and bias of perception. But it is often the first unavoidable anomaly that transforms one’s viewpoints. For me, this football game -- although hearsay evidence -- triggered the avalanche of change.

Hastorf and Cantril [1954] interpreted their experiment as evidence that “out of all the occurrences going on in the environment, a person selects those that have some significance for him from his own egocentric position in the total matrix.” Compare this ‘objective statistical experimental result’ to the much more subjective experiment and observation of Loren Eiseley [1978]:

“Curious, I took a pencil from my pocket and touched a strand of the [spider] web. Immediately there was a response. The web, plucked by its menacing occupant, began to vibrate until it was a blur. Anything that had brushed claw or wing against that amazing snare would be thoroughly entrapped. As the vibrations slowed, I could see the owner fingering her guidelines for signs of struggle. A pencil point was an intrusion into this universe for which no precedent existed. Spider was circumscribed by spider ideas; its universe was spider universe. All outside was irrational, extraneous, at best raw material for spider. As I proceeded on my way along the gully, like a vast impossible shadow, I realized that in the world of spider I did not exist.”

Stereotypes, of football teams or any group, are an essential way of organizing information. An individual can establish a stereotype through research or personal observation, but most stereotypes are unspoken cultural assumptions [Gould, 1981]. Once we accept a stereotype, we reinforce it through what we look for and what we notice.

The danger is that a stereotype too easily becomes prejudice -- a stereotype that is so firmly established that we experience the generalization rather than the individual, regardless of whether or not the individual fits the stereotype. When faced with an example that is inconsistent with the stereotype, the bigot usually dismisses the example as somehow non-representative. The alternative is acceptance that the prejudice is imperfect in its predictive ability, a conclusion that undermines one’s established world-view [Goleman, 1992c].

Too often, this process is not a game. When the jury verdict in the O.J. Simpson trial was announced, a photograph of some college students captured shock on every white face, joy on every black face; different evidence had been emphasized. The stakes of prejudice can be high, as in the following example from the New York Times.

“JERUSALEM, Jan. 4 -- A bus driven by an Arab collided with a car and killed an Israeli woman today, and the bus driver was then shot dead by an Israeli near the Gaza Strip.

“Palestinians and Israelis gave entirely different versions of the episode, agreeing only that the bus driver, Mohammed Samir al-Katamani, a 30-year-old Palestinian from Gaza, was returning from Ashkelon in a bus without passengers at about 7 A.M. after taking families of Palestinian prisoners to visit them in jail.

“The bus company spokesman, Mohammed Abu Ramadan, said the driver had accidentally hit the car in which the woman died. He said the driver became frightened after Israelis surrounded the bus and that he had grabbed a metal bar to defend himself.

“But Moshe Caspi, the police commander of the Lachish region, where the events took place, said the driver had deliberately rammed his bus into several cars and had been shot to death by a driver of one of those vehicles.

“The Israeli radio account of the incident said the driver left the bus shouting ‘God is great!’ in Arabic and holding a metal bar in his hand as he tried to attack other cars.” [Ibrahim, 1991]

* * *

“What a man sees depends both upon what he looks at and also upon what his previous visual-conceptual experience has taught him to see.” [Kuhn, 1970]

The emotional relationship between expectation and perception was investigated in an elegantly simple and enlightening experiment by Bruner and Postman [1949]. They flashed images of playing cards in front of a subject, and the subject was asked to identify the cards. Each card was flashed several times at progressively longer exposures. Some cards were normal, but some were bizarre (e.g., a red two of spades).

Subjects routinely identified each card after a brief exposure, but they failed to notice the anomaly. For example, a red two of spades might be identified as a two of spades or a two of hearts. As subjects were exposed more blatantly to the anomaly in longer exposures, they began to realize that something was wrong but they still had trouble pinpointing the problem. With progressively longer exposures, the anomalous cards eventually were identified correctly by most subjects. Yet nearly always this period between recognition of anomaly and identification of anomaly was accompanied by confusion, hesitation, and distress. Kuhn [1970] cited a personal communication from author Postman that even he was uncomfortable looking at the bizarre cards. Some subjects never were able to identify what was wrong with the cards.

The confusion, distress, and near panic of attempting to deal with observations inconsistent with expectations was eloquently expressed by one subject:

“I can’t make the suit out, whatever it is. It didn’t even look like a card that time. I don’t know what color it is now or whether it’s a spade or a heart. I’m not even sure now what a spade looks like. My God!”

* * *

Let us now consider a critical aspect of the relationship between expectation and perception: how that relationship is reinforced. Lord et al. [1979] investigated the evolution of belief in a hypothesis. They first asked their subjects to rate how strongly they felt about capital punishment. Then they gave each subject two essays to read: one essay argued in favor of capital punishment and one argued against it. Subsequent quizzing of the subjects revealed that they were less critical of the essay consistent with their views than of the opposing essay. This result is an unsurprising confirmation of the results of ‘They saw a game’ above.

The surprising aspect of Lord et al.’s [1979] finding was this: reading the two essays tended to reinforce a subject’s initial opinion. Lord et al. [1979] concluded that examining mixed, pro-and-con evidence further polarizes initial beliefs. This conclusion is particularly disturbing to scientists, because we frequently depend on continuing evaluation of partially conflicting evidence.

In analyzing this result, Kuhn et al. [1988] ask the key question: what caused the polarization to increase? Was it the consideration of conflicting viewpoints as hypothesized by Lord et al. [1979] or was it instead the incentive to reconsider their beliefs? Kuhn et al. [1988] suspect the latter, and suggest that similar polarization might have been obtained by asking the subjects to write an essay on capital punishment, rather than showing them conflicting evidence and opinions. I suspect that both interpretations are right, and both experiments would increase the polarization of opinions. Whether one is reading ‘objective’ pro-and-con arguments or is remembering evidence, one perceives a preponderance of confirming evidence.

Perception strengthens opinions, and perception is biased in favor of expectations.
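The reinforcement loop just described can be illustrated with a toy model. The sketch below is not the procedure of Lord et al. [1979] or of Kuhn et al. [1988]; it is a minimal illustration, assuming that a belief is a number between -1 (opposed) and +1 (in favor) and that evidence contradicting the current belief is simply given less weight. The function names and the `discount` and `step` parameters are hypothetical, chosen only for this example.

```python
def update_belief(belief, evidence, discount=0.5, step=0.2):
    """Nudge a belief in [-1, 1] toward one piece of evidence (+1 = pro, -1 = con).

    Evidence that disagrees with the current belief is down-weighted by
    `discount` (hypothetical parameter; discount=1.0 means no bias).
    """
    agrees = (belief * evidence) > 0
    weight = step if agrees else step * discount
    new_belief = belief + weight * evidence
    # Keep the belief inside the allowed range.
    return max(-1.0, min(1.0, new_belief))


def read_mixed_evidence(belief, rounds=5, discount=0.5):
    """Expose a reader to balanced evidence: one pro and one con item per round."""
    for _ in range(rounds):
        for evidence in (+1, -1):
            belief = update_belief(belief, evidence, discount)
    return belief


# Two readers with mild, opposite initial opinions see identical evidence.
supporter = read_mixed_evidence(0.2)
opponent = read_mixed_evidence(-0.2)
# A reader who weighs both sides equally ends where they started.
unbiased = read_mixed_evidence(0.2, discount=1.0)

print(supporter, opponent, unbiased)
```

Under these assumptions, the two biased readers drift farther apart even though the evidence is perfectly balanced, whereas the unbiased reader's opinion is unchanged. The model says nothing about why the discounting occurs; it only shows that a modest bias in weighing evidence is sufficient to produce polarization.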

* * *

Though the preceding case studies demonstrate that perception is much less objective and much more belief-based than we thought, they allow us to maintain faith in such basic perceptual assumptions as time and causality. Yet the next studies challenge even those assumptions.

“Henceforth space by itself, and time by itself, are doomed to fade away into mere shadows, and only a kind of union of the two will preserve an independent reality.”

With these stunning initial words, the Russo-German mathematician Hermann Minkowski [1908] began a lecture explaining his concept of space-time, an implication of Albert Einstein’s 1905 concept of special relativity.

Einstein assumed two principles: relativity, which states that no conceivable experiment would be able to detect absolute rest or uniform motion; and the constancy of the speed of light, which states that light travels through empty space with a speed c that is the same for all observers, independent of the motion of its source. Faced with two incompatible premises such as universal relative motion yet absolute motion for light, most scientists would abandon one. In contrast, Einstein said that the two principles are “only apparently irreconcilable,” and he instead challenged a basic assumption of all scientists -- that time is universal. He concluded that the simultaneity of separated events is relative. In other words, if two spatially separated events are simultaneous to one observer, then they are generally not simultaneous to a second observer who is moving relative to the first. Clocks in vehicles going at different speeds do not run at the same rate.
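A short worked equation may make the relativity of simultaneity concrete. The sketch below uses the standard Lorentz transformation of special relativity; the symbols $x$, $t$, $v$, and $\gamma$ are introduced here for illustration. Suppose a second observer moves at constant velocity $v$ along the $x$ axis relative to the first. The time that the second observer assigns to an event at position $x$ and time $t$ is

$$
t' = \gamma \left( t - \frac{v x}{c^{2}} \right), \qquad \gamma = \frac{1}{\sqrt{1 - v^{2}/c^{2}}} .
$$

For two events separated by $\Delta x$ and $\Delta t$ in the first observer's frame,

$$
\Delta t' = \gamma \left( \Delta t - \frac{v \, \Delta x}{c^{2}} \right) .
$$

If the events are simultaneous for the first observer ($\Delta t = 0$), then $\Delta t' = -\gamma v \, \Delta x / c^{2}$, which is nonzero whenever the observers are in relative motion ($v \neq 0$) and the events occur at different places ($\Delta x \neq 0$). At everyday speeds $v/c^{2}$ is so small that the discrepancy escapes notice.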

Although Einstein later relaxed the assumption of constant light velocity when he subsumed special relativity into general relativity in 1915, our concept of an objective observer’s independence from what is observed was even more shaken. Space and time are, as Minkowski suggested, so interrelated that it is most appropriate to think of a single, four-dimensional space-time. Indeed, modern physics finds that some atomic processes are more elegant and mathematically simple if we assume that time can flow either forward or backward. Gravity curves space-time, and observation depends on the motion of the observer.

* * *

Even if, as Einstein showed, observation depends on the motion of the observer, cannot we achieve objective certainty simply by specifying both? Werner Heisenberg [1927], in examining the implications of quantum mechanics, developed the principle of indeterminacy, more commonly known as “the Heisenberg uncertainty principle.” He showed that indeterminacy is unavoidable, because the process of observation invariably changes the observed object, at least minutely.

The following thought experiments demonstrate the uncertainty principle. We know that the only way to observe any object is by bouncing light off of it. In everyday life we ignore an implication of this simple truth: bouncing light off of the object must impart energy and momentum to the object. Analogously, if we throw a rock at the earth we can ignore the fact that the earth’s orbit is minutely deflected by the impact. But what if we bounce light, consisting of photons, off of an electron? Photons will jolt the electron substantially. Our observation invariably and unavoidably affects the object being observed. Therefore, we cannot simultaneously know both where the electron is and what its motion is. Thus we cannot know exactly where the particle will be at any time in the future. The more accurately we measure its location, the less accurately can we measure its momentum, and vice versa.
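The trade-off between location and momentum can be stated quantitatively. The relation below is the standard modern form of the uncertainty principle, not necessarily the form in which Heisenberg [1927] first wrote it; the symbols $\Delta x$, $\Delta p$, and $\hbar$ and the numerical example are added here for illustration.

$$
\Delta x \, \Delta p \;\geq\; \frac{\hbar}{2}, \qquad \hbar \approx 1.055 \times 10^{-34}\ \mathrm{J\,s}
$$

As a rough example, suppose we locate an electron to within about the size of an atom, $\Delta x = 10^{-10}$ m. Then

$$
\Delta p \;\geq\; \frac{\hbar}{2\,\Delta x} \approx 5.3 \times 10^{-25}\ \mathrm{kg\,m/s},
\qquad
\Delta v = \frac{\Delta p}{m_e} \;\gtrsim\; \frac{5.3 \times 10^{-25}}{9.11 \times 10^{-31}} \approx 5.8 \times 10^{5}\ \mathrm{m/s} .
$$

The electron's velocity is therefore uncertain by hundreds of kilometers per second. For a one-kilogram rock located to the same precision, the corresponding velocity uncertainty is about $5 \times 10^{-25}$ m/s, which is why we can ignore the effect in everyday life.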

“Natural science does not simply describe and explain nature; … it describes nature as exposed to our method of questioning.” [Heisenberg, 1958]

Our concepts of reality have already been revised by Einstein, Bohr, Heisenberg, and other atomic physicists; further revisions seem likely. We now know that observations are relative to the observer, that space is curved and time is relative, that observation unavoidably affects the object observed, that probability has replaced strict determinism, and that scientific certainty is an illusion. Is the observational foundation of science unavoidably unreliable, as some non-scientists have concluded?

Seldom can we blame uncontrolled observer-object interaction on atomic physics. The problem may be unintentional, it may be frequent (see the later section on ‘Pitfalls of Subjectivity’), but it is probably avoidable. In our quest for first-order phenomena (such as controls on the earth’s orbit), we can safely neglect trivial influences (such as a tossed rock).

Scientists are, above all, pragmatists. In practice, those of us who are not theoretical particle physicists or astronomers safely assume that time is absolute, that observation can be independent of the object observed, and that determinism is possible. For the vast majority of experimental situations encountered by scientists, these assumptions, though invalid, are amazingly effective working hypotheses. If we are in error by only one quantum, it is cause for celebration rather than worry. The fundamental criterion of science is, after all, what works.

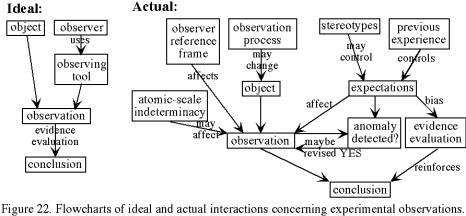

We cannot, however, cling to the comfortable myth of detached observation and impartial evaluation of objectively obtained evidence. The actual process is much more complex and more human (Figure 22):

Expectations, rooted in previous experience or in stereotypes, exert a hidden influence -- usually even a control -- on both our perceptions and our evaluation of evidence. We tend to overlook or discount information that is unexpected or inconsistent with our beliefs. Anomaly, once recognized, can transform our perspectives fundamentally, but not without an emotional toll: “My God!” was the subject’s response, when the stakes were only recognition of a playing card!

“Twenty men crossing a bridge,

Into a village,

Are twenty men crossing twenty bridges,

Into twenty villages.”

[Wallace Stevens, 1931]

The scientific challenge is to use admittedly subjective means, coupled with imperfect assumptions, yet achieve an ‘objective’ recognition of patterns and principles. To do so, we must understand the limitations of our methods. We must understand how the perception process and memory affect our observations, in order to recognize our own biases.

* * *

Perception, Memory, and Schemata

Perception and memory are not merely biased; even today, they are only partially understood. In the mid-17th century René Descartes took the eye of an ox, scraped its back to make it transparent, and looked through it. The world was inverted. This observation confirmed Johannes Kepler’s speculation that the eye resembles a camera, focusing an image on its back surface with a lens [Neisser, 1968]. Of course, ‘camera’ had a different meaning in the 17th century than it does today; it was a black box with a pinhole aperture, and it used neither lens nor film. Nevertheless, the analogy between camera and eye was born, and it persists today.

If the eye is like a camera, then is memory like a photograph? Unfortunately it is not; the mistaken analogy between memory and photographs has delayed our understanding of memory. The eye does not stand still to ‘expose’ an image; it jumps several times per second, jerkily focusing on different regions. The fovea, the portion of the eye’s inner surface capable of the highest resolution, sharply discerns only a small portion of the field of view. A series of eye jerks constructs a composite, high-resolution image of the interesting portion of the visual field.

Consciousness and memory do not record or notice each jerky ‘exposure’. Indeed, if we were to make a motion picture that jumped around the way the eye does, the product would be nerve-wracking or nauseating. Our vision does not bother us as this movie would, because attention controls the focus target; attention does not passively follow the jumps.

A closer analogy to the functioning of the eye and mind is the photomosaic, a composite image formed by superimposing the best parts of many overlapping images. To make a photomosaic of a region from satellite photographs, analysts do not simply paste overlapping images. They pick the best photo for each region, discarding the photos taken during night or through clouds. Perhaps the eye/mind pair acts somewhat similarly; from an airplane it can put together an image of the landscape, even through scattered small clouds. Furthermore, it can see even with stroboscopic light. Both motion pictures and fluorescent light are stroboscopic; we do not even notice because of the high frequency of flashes.
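The photomosaic procedure can be sketched in a few lines. This is only an illustration of the ‘pick the best photo for each region’ idea, not a description of how analysts or the visual system actually work. It assumes that every image covers the same list of regions and that each region already carries a quality score between 0 and 1; the function name `build_mosaic`, the scores, and the tile labels are all hypothetical.

```python
def build_mosaic(images):
    """Assemble a composite by keeping the best-scoring tile for each region.

    `images` is a list of images; each image is a list of
    (quality, tile_label) tuples, one tuple per region, in the same order.
    """
    n_regions = len(images[0])
    mosaic = []
    for region in range(n_regions):
        # Compare this region across all images and keep the clearest view.
        best_quality, best_tile = max(
            (image[region] for image in images), key=lambda tile: tile[0]
        )
        mosaic.append(best_tile)
    return mosaic


# Two satellite passes over the same three regions (made-up scores).
pass_a = [(0.9, "A1"), (0.1, "A2 (cloud)"), (0.5, "A3")]
pass_b = [(0.2, "B1 (night)"), (0.8, "B2"), (0.7, "B3")]

mosaic = build_mosaic([pass_a, pass_b])
print(mosaic)
```

The composite takes region 1 from the first pass and regions 2 and 3 from the second, so it corresponds to no single photograph. In that limited respect it resembles the mental image assembled from many separate glances.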

Julian Hochberg suggested a simple exercise that offers insight into the relationship of vision to memory: remember how many windows are on the front of your house or apartment house [Neisser, 1968]. You may be able to solve this problem ‘analytically’, by listing the rooms visible from the front and remembering how many windows face front in each room. Instead, you probably will use more obvious visualization, creating a mental image of the front of your house, and then scanning this image while counting windows. This mental image does not correspond to any single picture that the eye has ever seen. There may be no single location outside your house where you could stand and count every window; trees or bushes probably hide some windows.

During the last thousand years, various cultures have grappled with the discrepancy between construct and reality, or that between perspective and reality. More than six centuries before Descartes’ experiment with the eye of an ox, Arab scientists were making discoveries in optics. Alhazen, who wrote Optics [~1000 A.D.], realized that vision consists of light reflecting off of objects and forming a cone of light entering the eye. This first step toward an understanding of perspective was ignored by western scientists and artists. Artists tried to paint scenes as they are rather than as they appear to the eye. Beginning in the 15th century and continuing into the 16th century, artists such as Filippo Brunelleschi began to deliberately use Arab and Greek theories of perspective to make paintings appear more lifelike.

The perspective painting is lifelike in its mimicry of the way an eye or camera sees. In contrast, the older attempts to paint objects ‘as they are’ are more analogous to mental schemata. In this sense, the evolution of physics has paralleled that of art. Newton described dynamics as a picture of ‘how things are’, rather than how they appear to the observer. In contrast, Einstein demonstrated that we can only know things from some observer’s perspective.

* * *

The electrical activity in the brain is not designed to store a photograph in a certain location, the way that one can electrically (or magnetically) store a scanned image in a computer. True, visual imaging is generally located in one part of the brain called the visual cortex, but removal of any small part of the brain does not remove individual memories and leave all others unchanged; it may weaken certain types of memories. Memories are much more complex than mental images. They may include visual, auditory, and other sensory data along with emotional information. Recollection involves their simultaneous retrieval from different parts of the brain. A memory consists of mental electrical activity, more analogous to countless conductors in parallel than to a scanned image. However, this analogy offers little or no real insight into how the mind/brain works.

A more illuminating perspective on both perception and memory is the concept of schemata, the meaningful elements of our visual environment. You or I can identify a friend’s face in a group photo in seconds or less. In contrast, if you had to help someone else find your friend by describing the friend’s characteristics, identification would be dilatory and uncertain. Evolutionary pressure favors fast identification: survival may depend on rapid recognition of predators. Obviously our pattern recognition techniques, whether of predators or friends, are extremely efficient and unconscious. Schemata achieve that needed speed.

Schemata are the building blocks of memories and of pattern recognition. An individual schema is somewhat like one of Plato’s forms: an idealized representation of an object’s essence. It may not match any specific object that we have seen. Instead, it is a composite constructed from our history of observing and recognizing examples of the object. A group of neural pathways fires during our first exposure to the object, thereby becoming associated with the object. Later recognition of the object triggers re-firing along these paths.

Schemata are somewhat like the old rhyme,

“Big fleas have little fleas

On their backs to bite 'em,

And little fleas have smaller fleas

And so ad infinitum.”

Even a schema as ‘simple’ as pencil is made up of many other schemata: textural (e.g., hard), morphological (e.g., elongated, cylindrical, conical-ended), and especially functional. And an individual schema such as cylindrical is composed of lower-level schemata. Another example of a schema is a musical composition, composed of lower-level patterns of a few bars each, and -- at the lowest level -- notes.

It all sounds hopelessly cumbersome, but the process is an elegantly efficient flow of electrical currents in parallel. Identification of a pattern does not require identification of all its elements, and identification of a higher-level schema such as pencil does not await identification of all the lower-level schemata components. If I see a snake, then I do not have the luxury of a casual and completely reliable identification: the schemata sinuous, cylindrical, 6" to 6' (however that information is stored as schema), and moving may trigger a jump response before I realize why I am jumping. I’ve seen a cat jump straight up into the air on encountering a sinuous stick.

* * *

We filter out all but the tiniest fraction of our sensory inputs; otherwise we would go mad. One by one, we label and dismiss these signals, unconsciously telling them, “You’re not important; don’t bother me.”

“Novelty itself will always rivet one’s attention. There is that unique moment when one confronts something new and astonishment begins. Whatever it is, it looms brightly, its edges sharp, its details ravishing, in a hard clear light; just beholding it is a form of revelation, a new sensory litany. But the second time one sees it, the mind says, Oh, that again, another wing walker, another moon landing. And soon, when it’s become commonplace, the brain begins slurring the details, recognizing it too quickly, by just a few of its features.” [Ackerman, 1990]

Schema modification begins immediately after first exposure, with a memory replay of the incident and the attempt to name it and associate it with other incidents. Schema development may be immediate or gradual, and this rate is affected by many factors, particularly emotion and pain. Consider the following three experiments:

A slug is fed a novel food, and then it is injected with a chemical that causes it to regurgitate. From only this one learning experience, it will immediately react to every future taste of this food by regurgitating. [Calvin, 1986]

A rat is trained to press a lever for food. If it receives food every time that it presses the lever, it will learn much faster than if it is only intermittently reinforced.

A rat is trained to press a lever for food. Randomly, however, it receives a shock rather than food when it presses the lever. If this is the only food source, the rat will continue to press the lever, but it is likely to develop irrational behavior (e.g., biting its handler) in other respects.

Emotional content and conflict enhance schema formation and memory:

Consider two nearly identical physics lectures on ballistics. The only difference is that the instructor begins one by silently loading a gun and placing it on the lectern so that it is visible to the students throughout the lecture. Which class will remember ballistics better?

A schema may include non-relevant aspects, particularly those neural patterns that happened to be flowing during the first experience of the schema. Thus is superstition born, and in Chapter 4 we discussed the associated inductive fallacy of ‘hasty generalization.’ Non-relevant aspects, once established in the schema, are slow to disappear if one is not consciously aware of them. For example, a primary aim of psychotherapy is identification of behavior patterns formed in childhood and no longer appropriate. Deliberate schema modification can backfire:

I once decided to add some enjoyment to the chore of dish washing by playing my favorite record whenever I washed dishes. It helped for a while, but soon I found that listening to that music without washing dishes seemed more like work than like recreation. I had created an associative bridge between the two sets of neural patterns, so that each triggered the emotional associations of the other.

Identification of a pattern does not require an exact match with the schema. Because we are identifying the schema holistically rather than by listing its components, we may not notice that some components are missing. Nor are extra, noncharacteristic components always detected. For example, people tend to overlook aspects of their own lives that are inconsistent with their current self-image [Goleman, 1992b]. The conditioned schema of previous experiences adds the missing component or ignores the superfluous component.

Memory can replay one schema or a series of schemata without any external cues, simply by activating the relevant neural pathways. Memory recollects the holistic schema that was triggered by the original experience; it does not replay the actual external events. Missing or overlooked elements of the schema are ignored in the memories. Thus eyewitness testimony is notoriously poor. Thus scientists trust their written records much more than their recollections of an experiment. Neural pathways are reinforced by any repeat flow -- real or recalled. Each recollection has the potential of modifying a memory. I may think that I am remembering an incident from early childhood, but more likely I am actually recalling an often-repeated recollection rather than the initial experience. Recollection can be colored by associated desire. Some individuals are particularly prone to self-serving memory, but everyone is affected to some degree. Thus, when I described the first football game near the start of this chapter, I said:

“I prefer to think that our team was inspired by this event, that we stopped the opponents’ advance just short of a touchdown, and that we recovered the ball and drove for the winning touchdown. Indeed, I do vaguely remember that happening, but I am not certain.”

The individual always attempts schema identification based on available cues, whether or not those cues are sufficient for unique identification. In such cases the subconscious supplies the answer that seems most plausible in the current environment. Ittelson and Kilpatrick [1951] argue persuasively that optical illusions are simply a readily investigated aspect of the much broader phenomenon of subconsciously probabilistic schema identification.

“Resulting perceptions are not absolute revelations of ‘what is out there’ but are in the nature of probabilities or predictions based on past experience. These predictions are not always reliable, as the [optical illusion] demonstrations make clear.” [Ittelson and Kilpatrick, 1951]

“Perception is not determined simply by the stimulus patterns; rather it is a dynamic searching for the best interpretation of the available data… Perception involves going beyond the immediately given evidence of the senses: this evidence is assessed on many grounds and generally we make the best bet… Indeed, we may say that a perceived object is a hypothesis, suggested and tested by sensory data.” [Gregory, 1966]

* * *

No wonder expectations affect our observations! With a perceptual system geared to understanding the present by matching it to previous experience, of course we are prone to see what we expect to see, overlook anomalies, and find that evidence confirms our beliefs (Figure 22). Remarkably, the scientist’s propensity for identifying familiar patterns is combined with a hunger for discovering new patterns. For the fallible internal photographer, it doesn’t matter whether the spectacle is the expansion of the universe or the fate of a bug:

“So much of the fascinating insect activity around escapes me. No matter how much I see, I miss far more. Under my feet, in front of my eyes, at my very finger’s end, events are transpiring of fascinating interest, if I but knew enough or was fortunate enough to see them. If the world is dull, it is because we are blind and deaf and dumb; because we know too little to sense the drama around us.” [Fabre, cited by Teale, 1959]

* * *

“Science is a social phenomenon… It progresses by hunch, vision, and intuition. Much of its change through time is not a closer approach to absolute truth, but the alteration of cultural contexts that influence it. Facts are not pure information; culture also influences what we see and how we see it. Theories are not inexorable deductions from facts; most rely on imagination, which is cultural.” [Gould, 1981]

In the mid-twentieth century, the arts were dominated by modernism, which emphasized form and technique. Many people thought modernism was excessively restrictive. In reaction, the postmodern movement was born in the 1960s, embracing a freedom and diversity of styles. Postmodern thinking abandoned the primacy of linear, goal-oriented behavior and adopted a more empathetic, multipath approach that valued diverse ethnic and cultural perspectives. Postmodernism encompassed the social movements of religious and ethnic groups, feminists, and gays, promoting pluralism of personal realities.

According to the postmodern critique, objective truth is a dangerous illusion, developed by a cultural ‘elite’ but sold as a valid multicultural description. Cultural influences are so pervasive that truth, definite knowledge, and objectivity are unobtainable. Consequently, we should qualify all findings by specifying the investigator’s cultural framework, and we should encourage development of multiple alternative perspectives (e.g., feminist, African American, non-Western).

During the last two decades, postmodernism has become the dominant movement of literature, art, philosophy, and history. It has also shaken up some of the social sciences, especially anthropology and sociology. It is, at the moment, nearly unknown among physical scientists. However, some proponents of postmodernism claim that it applies to all sciences. Most postmodernists distrust claims of universality and definite knowledge, and some therefore distrust the goals and products of science.

“The mythology of science asserts that with many different scientists all asking their own questions and evaluating the answers independently, whatever personal bias creeps into their individual answers is cancelled out when the large picture is put together… But since, in fact, they have been predominantly university-trained white males from privileged social backgrounds, the bias has been narrow and the product often reveals more about the investigator than about the subject being researched.” [Hubbard, 1979]

Postmodern literary criticism seeks deconstruction of the cultural and social context of literary works. More broadly, deconstruction analysis is thought to be appropriate for any claim of knowledge, including those of scientists. For example, postmodern anthropologists recognize that many previous interpretations were based on overlaying twentieth-century WASP perspectives onto cultures with totally different worldviews. They reject the quest for universal anthropological generalizations and laws, instead emphasizing the local perspective of a society and of groups within that society [Thomas, 1998].

The major issues for the sciences are those introduced earlier in this chapter. Theories and concepts inspire data collection, determining what kinds of observations are considered to be worthwhile. Resulting observations are theory-laden, in the sense of being inseparable from the theories, concepts, values, and assumptions associated with them. Many values and assumptions are universal (e.g., space and time) and some are nearly so (e.g., causality) and therefore reasonably safe. If, however, some values and assumptions are cultural rather than universal, then associated scientific results are cultural rather than universal. Other scientists with different backgrounds might reach incompatible conclusions that are equally valid.

Bertrand Russell [1927] commented wryly on how a group’s philosophical approach can affect its experimental results: “Animals studied by Americans rush about frantically, with an incredible display of hustle and pep, and at last achieve the desired result by chance. Animals observed by Germans sit still and think, and at last evolve the solution out of their inner consciousness.”

The postmodern critique challenges us to consider the extent to which social constructions may bias our scientific values, assumptions, and even the overall conceptual framework of our own scientific discipline. Many hypotheses and experiments are legitimately subject to more than one interpretation; would a different culture or ethnic group have reached the same conclusion?

Most scientists are likely to conclude that their scientific discipline is robust enough to be unshaken by the postmodern critique. True, observations are theory-laden and concepts are value-laden, but the most critical data and hypotheses are powerful enough to demonstrate explanatory power with greater scope than their origins. Politics and the economics of funding availability undoubtedly affect the pace of progress in the various sciences, but rarely their conclusions. Social influences such as a power elite are capable at most of a temporary disruption of the scientific progress of a science.

In the first section of this chapter, Jarrard’s case-study examples include two football games and a murder. In the previous chapter, Jarrard uses three military quotes, a naval example, an analogy to military strategy and tactics, and two competitive-chess quotes. Clearly, Jarrard is an American male.

Wilford [1992a] offers a disturbing insight into a scientific field that today is questioning its fundamentals. The discipline is anthropology, and many anthropologists wonder how much of the field will survive this self-analysis unscathed. The trigger was an apparently innocuous discovery about the Maori legend of colonization of New Zealand, a legend that describes an heroic long-distance migration in seven great canoes. Much of the legend, anthropologists now think, arose from the imaginative interpretation of anthropologists. Yet significantly, the Maoris now believe the legend as part of their culture.

Can anthropologists hope to achieve an objective knowledge of any culture, if that culture’s perceptive and analytical processes are inescapably molded by a different culture? Are they, in seeking to describe a tradition, actually inventing and sometimes imposing one? If cultural traditions are continuously evolving due to internal and external forces, where does the scientist seek objective reality?

Are the anthropologists alone in their plight?

* * *

“The nature of scientific method is such that one must suppress one’s hopes and wishes, and at some stages even one’s intuition. In fact the distrust of self takes the form of setting traps to expose one’s own fallacies.” [Baker, 1970]

How can we reconcile the profound success of science with the conclusion that the perception process makes objectivity an unobtainable ideal? Apparently, science depends less on complete objectivity than most of us imagine. Perhaps we do use a biased balance to weigh and evaluate data. All balances are biased, but those who are aware of the limitations can use them effectively. To improve the accuracy of a balance, we must know its sources of error.

Pitfalls of subjectivity abound. We can find them in experimental designs, execution of experiments, data interpretations, and publications. Some can be avoided entirely; some can only be reduced.

• ignoring relevant variables: Some variables are ignored because of sloppiness. For example, many experimental designs ignore instrument drift, even though its bias can be removed. Often, however, intentions are commendable but psychology intervenes.

1) We tend to ignore those variables that we consider irrelevant, even if other scientists have suggested that these variables are significant.

2) We ignore variables if we know of no way to remove them, because considering them forces us to admit that the experiment has ambiguities.

3) If two variables may be responsible for an effect, we concentrate on the dominant one and ignore the other.

4) If the influence of a dominant variable must be removed, we are likely to ignore ways of removing it completely. We unconsciously let it exert at least residual effects [Kuhn et al., 1988].

• confirmation bias:

1) During the literature review that precedes experiment, we may preferentially seek and find evidence that confirms our beliefs or preferred hypothesis.

2) We select the experiment most likely to support our beliefs. This insidiously frequent pitfall allows us to maintain the illusion of objectivity (for us as well as for others) by carrying out a rigorous experiment, while nevertheless obtaining a result that is comfortably consistent with expectations and desires.

This approach can hurt the individual more than the scientific community. When two conflicting schools of thought each generate supporting information, the battling individuals simply grow more polarized, yet the community may weigh the conflicting evidence more objectively. Individuals seeking to confirm their hypothesis may overlook ways of refuting it, but a skeptical scientific community is less likely to make that mistake.

• biased sampling: Subjective sampling that unconsciously favors the desired outcome is easily avoided by randomization. Too often, however, we fail to consider the relevance of this problem during experimental design, when countermeasures are still available.

• wish-fulfilling assumption: In conceiving an experiment, we may realize that it could be valuable and diagnostic if a certain assumption were valid or if a certain variable could be controlled. Strong desire for an obstacle to disappear tempts us to conclude that it is not really an obstacle.

• biased abortive measurements: Sometimes a routine measurement may be aborted. Such data are rejected because of our subjective decision that a distraction or an intrusion by an uncontrolled variable has adversely affected that measurement’s reliability. If we are monitoring the measurement results, then our data expectations can influence the decision to abort or continue a measurement. Aborted measurements are seldom mentioned in publications, because they weaken reader confidence in the experiment (and maybe even in the experimenter).

The biasing effect can be reduced in several ways: (1) ‘blind’ measurements, during which we are unaware of the data’s consistency or inconsistency with the tested hypothesis; (2) a priori selection of criteria for aborting a measurement; and (3) completion of all measurements, followed by discussion in the publication of the rationale for rejecting some.

• biased rejection of measurements: Unanticipated factors and uncontrolled variables can intrude on an experiment, potentially affecting the reliability of associated data. Data rejection is one solution. Many data-rejection decisions are influenced by expectations concerning what the data ‘should be’.

Rejection may occur as soon as the measurement is completed or in the analysis stage. As with aborted data, rejected measurements should be, but seldom are, mentioned in publication. Data-rejection bias is avoidable, with the same precautions as those listed above for reducing bias from aborted measurements.

• biased mistakes: People make mistakes, and elaborate error checking can reduce but not totally eliminate mistaken observations. Particularly in the field of parapsychology where subtle statistical effects are being detected (e.g., card guessing tests for extrasensory perception, or ESP), much research has investigated the phenomenon of ‘motivated scoring errors.’ Scoring hits or misses on such tests appears to be objective: either the guess matched the card or it did not. Mistakes, however, are more subjective and biased: believers in ESP tend to record a miss as a hit, and nonbelievers tend to score hits as misses.

Parapsychology experimenters long ago adapted experimental design by creating blinds to prevent motivated scoring errors, but researchers in most other fields are unaware of or unworried by the problem. Motivated scoring errors are subconscious, not deliberate. Most scientists would be offended by the suggestion that they were vulnerable to such mistakes, but you and I have made and will make the following subconsciously biasing mistakes:

1) errors in matching empirical results to predictions,

2) errors in listing and copying results,

3) accidental omissions of data, and

4) mistakes in calculations.

• missing the unexpected: Even ‘obvious’ features can be missed if they are unexpected. The flash-card experiment, discussed earlier in this chapter, was a memorable example of this pitfall. Unexpected results can be superior to expected ones: they can lead to insight and discovery of major new phenomena (Chapter 8). Some common oversights are: (1) failing to notice disparate results among a mass of familiar results; (2) seeing but rationalizing unexpected results; and (3) recording but failing to follow up or publish unexpected results.

• biased checking of results: To avoid mistakes, we normally check some calculations and experimental results. To the extent that it is feasible, we try to check all calculations and tabulations, but in practice we cannot repeat every step. Many researchers selectively check only those results that are anomalous in some way; such data presumably are more likely to contain an error than are results that look OK. The reasoning is valid, but we must recognize that this biased checking imparts a tendency to obtain results that fulfill expectations. If we perform many experiments and seldom make mistakes, the bias is minor. For a complex set of calculations that could be affected substantially by a single mistake, however, we must beware the tendency to let the final answer influence the decision whether or not to check the calculations. Biased checking of results is closely related to the two preceding pitfalls of making motivated mistakes and missing the unexpected.

• missing important ‘background’ characteristics: Experiments can be affected by a bias of human senses, which are more sensitive to detecting change than to noticing constant detail [Beveridge, 1955]. In the midst of adjusting an independent variable and recording responses of a dependent variable, it is easy to miss subtle changes in yet another variable or to miss a constant source of bias. Exploitation of this pitfall is the key to many magicians’ tricks; they call it misdirection. Our concern is not misdirection but perception bias. Einstein [1879-1955] said, “Raffiniert ist der Herrgott, aber boshaft ist er nicht” (“God is subtle, but he is not malicious”), meaning that nature’s secrets are concealed through subtlety rather than trickery.

• placebo effect: When human subjects are involved (e.g., psychology, sociology, and some biology experiments), their responses can reflect their expectations. For example, if given a placebo (a pill containing no medicine), some subjects in medical experiments show a real, measurable improvement in their medical problems due to their expectation that this ‘medicine’ is beneficial. Many scientists avoid consideration of mind/body interactions; scientific recognition of the placebo effect is an exception. This pitfall is familiar to nearly all scientists who use human subjects. It is avoidable through the use of a blind: the experimenter who interacts with the subject does not know whether the subject is receiving medicine or placebo.

• subconscious signaling: We can influence an experimental subject’s response involuntarily, through subconscious signaling. As with the placebo effect, this pitfall is avoidable through the use of blinds.

• confirmation bias in data interpretation: Data interpretation is subjective, and it can be dominated by prior belief. We should separate the interpretation of new data from the comparison of these data to prior results. Most publications do attempt to distinguish data interpretation from reconciliation with previous results. Often, however, the boundary is fuzzy, and we bias the immediate data interpretation in favor of our expectations from previous data.

• hidden control of prior theories on conclusions: Ideally, we should compare old and new data face-to-face, but too often we simply recall the conclusions based on previous experiments. Consequently, we may not realize how little chance we are giving a new result to displace our prior theories and conclusions. This problem is considered in more detail in that part of the next chapter devoted to paradigms.

• biased evaluation of subjective data: Prior theories always influence our evaluation of subjective data, even if we are alert to this bias and try to be objective. We can avoid this pitfall through an experimental method that uses a blind: the person rating the subjective data does not know whether the data are from a control or test group, or what the relationship is of each datum to the variable of interest. However, researchers in most disciplines never even think of using a blind; nor can we use a blind when evaluating published studies by others.

• changing standards of interpretation: Subjectivity permits us to change standards within a dataset or between datasets, to exclude data that are inconsistent with our prior beliefs while including data that are more dubious but consistent with our expectations [Gould, 1981]. A similar phenomenon is the overestimation of correlation quality when one expects a correlation and underestimation of correlation quality when no correlation is expected [Kuhn et al., 1988].

• language bias: We may use different words to describe the same experimental result, to minimize or maximize its importance (e.g., ‘somewhat larger’ vs. ‘substantially larger’). Sarcasm and ridicule should have no place in a scientific article; they undermine data or interpretations in a manner that obscures the actual strengths and weaknesses of evidence.

• advocacy masquerading as objectivity: We may appear to be objective in our interpretations, while actually letting them be strongly influenced by prior theories. Gould [1981], who invented this expression, both criticizes and falls victim to this pitfall.

• weighting one’s own data preferentially: This problem is universal. We know the strengths and weaknesses of personally-obtained data much better than those of other published results. Or so we rationalize our preference for our own data. Yet both ego and self-esteem play a role in the frequent subjective decision to state in print that one’s own evidence supersedes conflicting evidence of others.

• failure to publish negative results: Many experimental results are never published. Perhaps the results are humdrum or the experimental design is flawed, but often we fail to publish simply because the results are negative: we do not understand them, they fail to produce a predicted pattern, or they are otherwise inconsistent with expectations. If we submit negative results for publication, the manuscript is likely to be rejected because of unfavorable reviews (‘not significant’). I have even heard of a journal deliberately introducing this bias by announcing that it will not accept negative results for publication. Yet a diagnostic demonstration of negative results can be extremely useful -- it can force us to change our theories.

• concealing the pitfalls above: The myth of objectivity usually compels us to conceal evidence that our experiment is subject to any of the pitfalls above. Perhaps we make a conscious decision not to bog down the publication with subjective ambiguities. More likely, we are unaware or only peripherally cognizant of the pitfalls. Social scientists recognize the difficulty of completely avoiding influence of the researcher’s values on a result. Therefore they often use a twofold approach: try to minimize bias, and also specifically spell out one’s values in the publication, so that the reader can judge success.
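
Several of the pitfalls above (biased sampling, biased abortive or rejected measurements, motivated scoring errors, biased evaluation of subjective data) share two countermeasures: randomization and the blind. As a minimal sketch, assuming that samples can be identified by simple labels, the following Python function randomly assigns samples to test and control groups and gives the measurer only opaque codes. The function name, the code format, and the two-group design are illustrative assumptions, not a procedure prescribed in this chapter.

```python
import random


def randomize_and_blind(sample_ids, seed=None):
    """Randomly assign samples to 'test' or 'control' and give each an opaque code.

    Returns (blinded_codes, key). The person making measurements sees only
    blinded_codes; key maps each code to (sample_id, group) and should stay
    sealed until every measurement has been recorded.
    All names here are hypothetical, chosen for illustration.
    """
    rng = random.Random(seed)
    ids = list(sample_ids)
    rng.shuffle(ids)  # random order replaces the experimenter's subjective choice
    half = len(ids) // 2
    groups = ["test"] * half + ["control"] * (len(ids) - half)

    codes = [f"S{n:03d}" for n in range(1, len(ids) + 1)]
    rng.shuffle(codes)  # so a code number hints at neither group nor sample
    key = {code: (sid, grp) for code, sid, grp in zip(codes, ids, groups)}

    blinded_codes = sorted(key)  # what the measurer is handed
    return blinded_codes, key


if __name__ == "__main__":
    codes, key = randomize_and_blind(["rock_A", "rock_B", "rock_C", "rock_D"], seed=1)
    print("Measurer sees:", codes)
    # The key is consulted only after all measurements are written down.
```

Fixing the seed makes the assignment reproducible, so it can be reported in the publication. Criteria for aborting or rejecting a measurement can be written down at the same stage, before any data are seen.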

* * *

“The great investigator is primarily and preeminently the man who is rich in hypotheses. In the plenitude of his wealth he can spare the weaklings without regret; and having many from which to select, his mind maintains a judicial attitude. The man who can produce but one, cherishes and champions that one as his own, and is blind to its faults. With such men, the testing of alternative hypotheses is accomplished only through controversy. Crucial observations are warped by prejudice, and the triumph of the truth is delayed.” [Gilbert, 1886]

* * *

Penzias and Wilson [1965] discovered the background radiation of the universe by accident. When their horn antenna detected this signal that was inconsistent with prevailing theories, their first reaction was that their instrument somehow was generating noise. They cleaned it, dismantled it, changed out parts, but they were still unable to prevent their instrument from detecting this apparent background radiation. Finally they were forced to conclude that they had discovered a real effect.

Pitfalls: biased checking of results;

biased rejection of measurements.
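
The pitfall of biased checking of results can be illustrated with a small simulation. The sketch below assumes a hypothetical experiment in which recording mistakes are symmetric (equally likely to raise or lower a value) and in which the experimenter rechecks only those results that look anomalous compared with the expected value. Every number in it (true value, expected value, noise, mistake rate, threshold) is an invented assumption, chosen only to make the effect visible.

```python
import random
import statistics


def simulate_checking(n=10000, true_value=10.0, expected=11.0, noise_sd=0.5,
                      mistake_prob=0.2, mistake_size=2.0, threshold=1.5, seed=0):
    """Compare the mean of unchecked results with the mean after selective checking.

    Returns (mean_unchecked, mean_selectively_checked).
    All parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    unchecked, selective = [], []
    for _ in range(n):
        correct = rng.gauss(true_value, noise_sd)  # what a careful recheck would give
        recorded = correct
        if rng.random() < mistake_prob:  # symmetric mistake: no bias of its own
            recorded += rng.choice([-mistake_size, mistake_size])
        unchecked.append(recorded)

        # Recheck (and so correct) only results far from what we expected.
        looks_anomalous = abs(recorded - expected) > threshold
        selective.append(correct if looks_anomalous else recorded)
    return statistics.mean(unchecked), statistics.mean(selective)


if __name__ == "__main__":
    mean_unchecked, mean_selective = simulate_checking()
    print("mean with no checking:       ", round(mean_unchecked, 3))
    print("mean with selective checking:", round(mean_selective, 3))
```

Under these assumptions, mistakes that push a result toward the expected value tend to escape checking, while mistakes that push it away are caught and corrected. The selectively checked mean therefore tends to drift toward the expectation, even though the mistakes themselves are unbiased.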

Throughout the 20th century, scientists from many countries have sought techniques for successfully predicting earthquakes. In 1900 Imamura predicted that a major earthquake would hit Tokyo, and for two decades he campaigned unsuccessfully to persuade people to prepare. In 1923, 160,000 people died in the Tokyo earthquake. Many predictions have been made since then, by various scientists, based on diverse techniques. Still we lack reliable techniques for earthquake prediction. Said Lucy Jones [1990] of the U.S. Geological Survey, “When people want something too much, it’s very easy to overestimate what you’ve got.” Most of the altruistic predictions suffered from one of the following pitfalls:

wish-fulfilling assumption or treatment of a variable;

biased sampling; or

confirmation bias in data interpretation.

* * *

The following examples were used by Gould [1981] to illustrate the severe societal damage that lapses in scientific objectivity can inflict.

If an objective, quantitative measure of intelligence quotient (IQ), independent of environment, could be found, then education and training possibly could be optimized by tailoring them to this innate ability. This rationale was responsible for development of the Army Mental Tests, which were used on World War I draftees. Among the results of these tests were the observations that white immigrants scored lower than white native-born subjects, and immigrant scores showed a strong correlation with the number of years since immigration. The obvious explanation for these observations is that the tests retained some cultural and language biases. The actual interpretation, which was controlled by desire for the tests to be objective measures of IQ, was the following: a combination of lower intelligence in Europeans than in Americans and of declining intelligence of immigrants. This faulty reasoning was used in establishing the 1924 Immigration Restriction Act. [Gould, 1981]

Pitfalls: wish-fulfilling assumption or treatment of variable;

ignoring relevant variables;

hidden control of prior theories on conclusions.

Bean ‘proved’ black inferiority by measuring brain volumes of blacks and whites and demonstrating statistically that black brains are smaller than white brains. His mentor Mall replicated the experiment, however, and found no significant difference in average brain size. The discrepancy of results is attributable to Mall’s use of a blind: at the time of measurement, he had no clues as to whether the brain he was measuring came from a black or white person. Bean’s many measurements had simply reflected his expectations. [Gould, 1981]

Pitfalls: biased evaluation of subjective data;

advocacy masquerading as objectivity.

In order to demonstrate that blacks are more closely related to apes than whites are, Paul Broca examined a wide variety of anatomical characteristics, found those showing the desired correlation, and then made a large number of careful and reliable measurements of only those characteristics. [Gould, 1981]

Pitfalls: confirmation bias in experimental design (selecting the experiment most likely to support one’s beliefs);

confirmation bias in data interpretation;

hidden control of prior theories on conclusions;

advocacy masquerading as objectivity.

* * *

“The objectivity of science is not a matter of the individual scientists but rather the social result of their mutual criticism.” [Popper, 1976]

“Creating a scenario may be best done inside a single head; trying to find exceptions to the scenario is surely best done by many heads.” [Calvin, 1986]

One can deliberately reduce the influence of the pitfalls above on one’s research. One cannot eliminate the effects of personal involvement and personal opinions, nor is it desirable to do so. Reliability of conclusions, not objectivity, is the final goal. Objectivity simply assists us in obtaining an accurate conclusion. Subjectivity is essential to the advance of science, because scientific conclusions are seldom purely deductive; usually they must be evaluated subjectively in the light of other knowledge.

But what of experimenter bias? Given the success of science in spite of such partiality, can it actually be a positive force in science? Or does science have internal checks and balances to reduce the adverse effects of bias? Several philosophers of science [e.g., Popper, 1976; Mannoia, 1980; Boyd, 1985] argue that a scientific community can make objective consensus decisions in spite of the biases of individual proponents.

It’s said [e.g., Beveridge, 1955] that only the creator of a hypothesis believes it (others are dubious), yet only the experimenter doubts his experiment (others cannot know all of the experimental uncertainties). Of course, group evaluation is much less credible and unanimous than this generalization implies. The point, instead, is that the individual scientist and the scientific community have markedly different perspectives.

Replication is one key to the power of group objectivity. Replicability is expected for all experimental results: it should be possible for other scientists to repeat the experiment and obtain similar results. Published descriptions of experimental technique need to be complete enough to permit that replication. As discussed in Chapter 2, follow-up studies by other investigators usually go beyond the original, often by increasing precision or by isolating variables. Exact replication of the initial experiment seldom is attempted, unless those original experimental results conflict with prior concepts or with later experiments. Individual lapses of objectivity are likely to be detected by the variety of perspectives, assumptions, and experimental techniques employed by the scientific community.

Perhaps science is much more objective than individual scientists, in the same way that American politics is more objective than either of the two political parties or the individual politicians within those parties. Politicians are infamous for harboring bias toward their own special interests, yet no doubt they seek benefits for their constituents more often than they pursue personal power or glory. Indeed, concern with personal power or glory is more relevant to scientists than we like to admit (Chapter 9).

The strength of the political party system is that two conflicting views are advocated by two groups, each trying to explain the premises, logic, and strengths of one perspective and the weaknesses of the other point of view. If one pays attention to both viewpoints, then hopefully one has all of the information needed for a reliable decision. Conflicting evidence confronts people with the necessity of personally evaluating evidence. Unfortunately, both viewpoints are not fully developed in the same place; we must actively seek the conflicting arguments.

Suppose a scientist writes a paper that has repeated ambivalent statements such as “X may be true, as indicated by Y and Z; on the other hand, …” That scientist may be objective, but the paper probably has little impact, because it leaves most readers with the impression that the subject is a morass of conflicting, irreconcilable evidence. Only a few readers will take the time to evaluate the conflicting evidence.

Now suppose that one scientist writes a paper saying, “X, not Y, is probable because…” and another scientist counters with “Y, not X, is probable because…” Clearly, the reader is challenged to evaluate these viewpoints and reach a personal conclusion. This dynamic opposition may generate a healthy and active debate plus subsequent research. “All things come into being and pass away through strife” [Heraclitus, ~550-475 B.C.]. Science gains, and the only losers are the advocates of the minority view. Even they lose little prestige, provided that they show in print that they have changed their minds because of more convincing evidence: their role is then remembered more for its active involvement in a fascinating problem than for being ‘wrong’. In contrast, the loser who continues as a voice in the wilderness does lose credibility (even if he or she is right).

“It is not enough to observe, experiment, theorize, calculate and communicate; we must also argue, criticize, debate, expound, summarize, and otherwise transform the information that we have obtained individually into reliable, well established, public knowledge.” [Ziman, 1969]

Given two contradictory datasets or theories (e.g., light as waves vs. particles), the scientific community gains if some scientists simply assume each and then pursue its ramifications. This incremental work eventually may offer a reconciliation or solution of the original conflict. Temporary abandonment of objectivity thus can promote progress.

Science is not democratic. Often a lone dissenter sways the opinions of the scientific community. The only compulsion to follow the majority view is peer pressure, which we first discovered in elementary school and which haunts us the rest of our lives.

A consensus evolves from conflicting individual views most readily if the debating scientists have similar backgrounds. Divergent scientific backgrounds cause divergent expectations, substantially delaying evolution of a consensus. For example, geology has gone through prolonged polarizations of views between Northern Hemisphere and Southern Hemisphere geologists on the questions of continental drift and the origin of granites. In both cases, the locally observable geologic examples were more convincing to a community than were arguments based on geographically remote examples.

This heterogeneity of perspectives and objectives is an asset to science, in spite of delayed consensus. It promotes group objectivity and improves error-checking of ideas. In contrast, groups that are isolated, homogeneous, or hierarchical tend to have similar perspectives. For example, Soviet science has lagged Western science in several fields, due partly to isolation and partly to a hierarchy that discouraged challenging of the leaders’ opinions.

* * *

The cold-fusion fiasco is an excellent example of the robustness of group objectivity, in contrast to individual subjectivity. In 1989 Stanley Pons and Martin Fleischmann announced that they had produced nuclear fusion in a test tube under ordinary laboratory conditions. The announcement was premature: they had not rigorously isolated variables and thoroughly explored the phenomenon. The rush to public announcement, which did not even wait for simultaneous presentation to peers at a scientific meeting, was generated by several factors: the staggering possible benefit to humanity of cheap nuclear power, the Nobel-level accolades that would accrue to the discoverers, the fear that another group might scoop them, executive decisions by the man who was president of my university, and exuberance.

The announcement ignited a firestorm of attempts to replicate the experiments. Very few of those attempts succeeded. Pons and Fleischmann were accused of multiple lapses of objectivity: wish-fulfilling assumptions, confirmation bias, ignoring relevant variables, mistakes, missing important background characteristics, and optimistic interpretation.

In a remarkably short time, the scientific community had explored and discredited cold fusion. Group objectivity had triumphed: fellow scientists had shown that they were willing to entertain a theoretically ridiculous hypothesis and subject it to a suite of experimental tests.

Groups can, of course, temporarily succumb to the same objectivity lapses as individuals. N rays are an example.

Not long after Roentgen’s discovery of X rays, René Blondlot published a related discovery: N rays, generated in substances such as heated metals and gases, refracted by aluminum prisms, and observed by phosphorescent detectors. If one sees what one expects to see, sometimes many can do the same. The enthusiastic exploration of N rays quickly led to dozens of publications on their ‘observed’ properties. Eventually, of course, failures to replicate led to more rigorous experiments and then to abandonment of the concept of N rays. The scientific community moved on, but Blondlot died still believing in his discovery.

We began this section with a paradox: how can it be possible for many subjective scientists to achieve objective knowledge? We concluded that science does have checks and balances that permit it to be much more objective than the individual scientists. The process is imperfect: groups are temporarily subject to the same subjectivity as individuals. Group ‘objectivity’ also has its own pitfalls. We shall postpone consideration of those pitfalls until the next chapter, however, so that we can see them from the perspective of Thomas Kuhn’s remarkable insights into scientific paradigm.